Last term in the Ph.D. program at Oklahoma State University, we spent an amazing day with Dr. Deborah Rupp, the William C. Byham Chair of Industrial/Organizational Psychology at Purdue University. Dr. Rupp is a researcher and expert in the area of Organizational Justice. Her Curriculum Vitae, available online, shows the remarkable body of work she has already produced.

When we read some of the academic papers in her area of expertise, the term ‘Organizational Justice’ really threw me. Switching the words slightly to ‘Organizational Fairness’ helps clarify it a little, but the meaning is still not obvious. Organizational Justice research addresses fairness in the workplace, organizational ethics, and high-performance work systems.

What was most interesting about the readings and her area of research is that they provide a framework that any manager could (should?) use in processing and communicating decisions that may affect organizational emotions, morale, and/or commitment levels. Internally, these are decisions such as organizational changes, promotions, raises, and project assignments. Externally, these are decisions such as partnership choices or business interactions.

Using the framework may provide two benefits: 1) it allows the manager to ensure that the decision is appropriate and fair before it is communicated, and 2) it clarifies for all affected parties the critical contributors to the decision. In short, it’s a way to ensure that tough, potentially challenging decisions are fairly made and communicated.

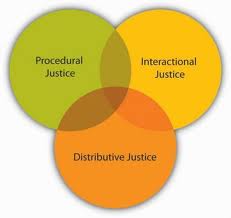

So, what is the framework? It’s made up of three specific components. For the sake of translating this to business application, I have generalized the descriptions. More detail, and the empirical data that supports these three components, is available for anyone who wants to churn through the academic articles:

1) Distributive Justice – Is the decision appropriate and fair regarding its outcomes and the distribution of resources? The outcomes or resources may be tangible (e.g., promotions, pay) or intangible (e.g., social recognition, praise). A manager needs to step back and ask, “Will the organization view this as a fair decision?”

2) Procedural Justice – Was the process used to make the decision fair? For a decision to be accepted as fairly arrived at, it must follow a process that the organization (internal or external) perceives as ‘fair’. In many cases this may mean taking additional steps that the manager considers unnecessary but that are critical to ensuring a fair process. For example, soliciting additional feedback on a potential promotion may seem unnecessary but may provide further evidence of the appropriateness (or inappropriateness) of the decision.

3) Interactional Justice – Was the decision communicated fairly and with respect for all parties? This component is made up of two related areas: interpersonal fairness and informational fairness. To be precise, Interactional Justice states that decisions must take into account the interpersonal response and must be communicated in a way that is fair and clear. For example, communicating a potential promotion to only part of a team would NOT be seen as fair, from both an interpersonal standpoint (those not in consideration will be upset) and an informational standpoint (those not in ‘the know’ will be upset). Ensuring that decisions are communicated with an intent to be fair and with sensitivity to the personal impact on individuals may improve the organization’s perception of fairness.

This framework provides an excellent tool for thinking through decisions that may affect perceptions of fairness. It may also serve as a checklist to ensure that decisions with difficult potential outcomes are well thought out.

Dr. Rupp’s work is incredibly important for managers and executives. Many managers are able to make good decisions but may not be good at communicating and implementing them. How many times are ‘correct’ decisions negatively impacted by poor implementation or communication of the decisions? Her work provides useful, implementable concepts that should improve decision implementation in organizations.

Rupp, D.E., Baldwin, A., & Bashshur, M. (2006). Using developmental assessment centers to foster workplace fairness. The Psychologist Manager Journal, 9(2), 145-170.

Rupp, D.E. (2010). An employee-centered model of organizational justice and social responsibility. Organizational Psychology Review, 1(1), 72-94.
